Why Machine Finance Demands Block-Level Data: Inside LYS Core

The Rise of Machine Finance

The capital markets of tomorrow will be dominated by machines, not out of preference but out of sheer necessity. In equities, algorithmic systems already execute 60–75% of trading volume. As markets become tokenized and move onchain, we believe that over 90% of capital market activity on chains like Solana will come from autonomous agents, arbitrage engines, liquidation bots, and other algorithmic systems.

But these machines don’t consume dashboards or CSV exports. They demand machine-ready signals delivered at block speed, with context. To operate at scale, they need a clear line of sight into the onchain state. That is exactly what LYS Core is built to provide: structured, contextual block-level data that turns raw transparency into usable intelligence.

The Problem: Raw Data Is Too Noisy, Too Slow, Too Fragmented

Transparency ≠ Usability

Solana provides tremendous transparency. Every swap, transfer, liquidation, and program invocation is logged on-chain. But the raw logs are dense, fragmented, and bereft of context.

Consider what a bot must do if it relies purely on RPC data:

Download a block of raw logs (often thousands of events)

Decode and parse program instructions

Resolve wallet public keys and aliasing

Normalize token mints, token decimals, protocol versions

Reconstruct multi-hop swaps or liquidity flows

Filter noise, classify events (e.g. distinguishing a margin trade from a hedge)

This data-prep pipeline often consumes 70% or more of development effort and runtime, leaving fewer cycles for strategy and more for plumbing.

By the time a strategy has reconstructed a liquidation cascade or detected a cross-protocol arbitrage, the price may have moved, the arb closed, or the opportunity evaporated.
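
To make the plumbing concrete, here is a minimal sketch of two of the steps listed above: normalizing raw token amounts by mint decimals and stitching individual swap legs into a multi-hop path. The `SwapLeg` shape, the `DECIMALS` table, and the sample amounts are illustrative assumptions, not a real RPC response format.

```python
from dataclasses import dataclass
from decimal import Decimal

# Hypothetical decimals table; a real pipeline would read this from each mint account.
DECIMALS = {"USDC": 6, "SOL": 9, "BONK": 5}


@dataclass(frozen=True)
class SwapLeg:
    """One decoded swap instruction (illustrative shape, not an RPC format)."""
    wallet: str
    mint_in: str
    raw_in: int   # integer base units, as amounts appear on-chain
    mint_out: str
    raw_out: int


def to_ui_amount(mint: str, raw: int) -> Decimal:
    """Convert integer base units into a human-scale amount."""
    return Decimal(raw).scaleb(-DECIMALS[mint])


def reconstruct_path(legs: list[SwapLeg]) -> list[str]:
    """Chain legs whose output mint feeds the next leg's input mint."""
    if not legs:
        return []
    path = [legs[0].mint_in]
    for leg in legs:
        if leg.mint_in != path[-1]:
            raise ValueError(f"leg {leg.mint_in}->{leg.mint_out} does not continue the path")
        path.append(leg.mint_out)
    return path


legs = [
    SwapLeg("W", "USDC", 25_000_000, "SOL", 150_000_000),
    SwapLeg("W", "SOL", 150_000_000, "BONK", 120_000_000_000),
]
print(reconstruct_path(legs))  # ['USDC', 'SOL', 'BONK']
```

Even this toy version needs a decimals lookup and an ordering assumption about the legs. A production pipeline also has to handle interleaved instructions from other wallets, inner instructions, and unknown mints.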

Latency Matters: Milliseconds Kill Alpha

Solana’s block/slot architecture has a target slot interval of ~400 ms (i.e., roughly 400 ms per potential block). Actual block production may vary, but this is the rhythm. In that interval, multiple opportunities can emerge and vanish.

If a real-time strategy loses just 100–200 ms to parsing and structuring, it often arrives too late. In liquidation cascades and narrow arbitrage spreads especially, that latency compounds into missed alpha.

Furthermore, research on RPC infrastructure shows that nodes (especially public RPC providers) can introduce 100–200 ms or more of lag in propagation or query response. For example, one RPC node may see a transaction 120 ms earlier or later than another node, even if both broadcast simultaneously.

Thus, relying on raw RPC + post-hoc parsing is structurally brittle for any high-frequency or competitive trading strategy.
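
The arithmetic behind this can be sketched directly from the figures above. The function name and the idea of a simple additive budget are assumptions made for illustration; real latency is neither constant nor strictly additive.

```python
SLOT_MS = 400  # Solana's target slot interval, as cited above


def remaining_budget_ms(rpc_lag_ms: float, parse_ms: float, slot_ms: float = SLOT_MS) -> float:
    """Time left in one slot for strategy logic and order submission."""
    return slot_ms - rpc_lag_ms - parse_ms


# Low and high ends of the 100-200 ms ranges quoted for RPC lag and parsing.
for rpc_lag, parse in [(100, 100), (200, 200)]:
    left = remaining_budget_ms(rpc_lag, parse)
    print(f"RPC lag {rpc_lag} ms + parsing {parse} ms -> {left} ms left "
          f"({left / SLOT_MS:.0%} of the slot)")
```

At the low end of both ranges, half the slot is gone before the strategy runs. At the high end, nothing is left.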

The LYS Core Solution: Real-Time Structured Streams

What Core Does

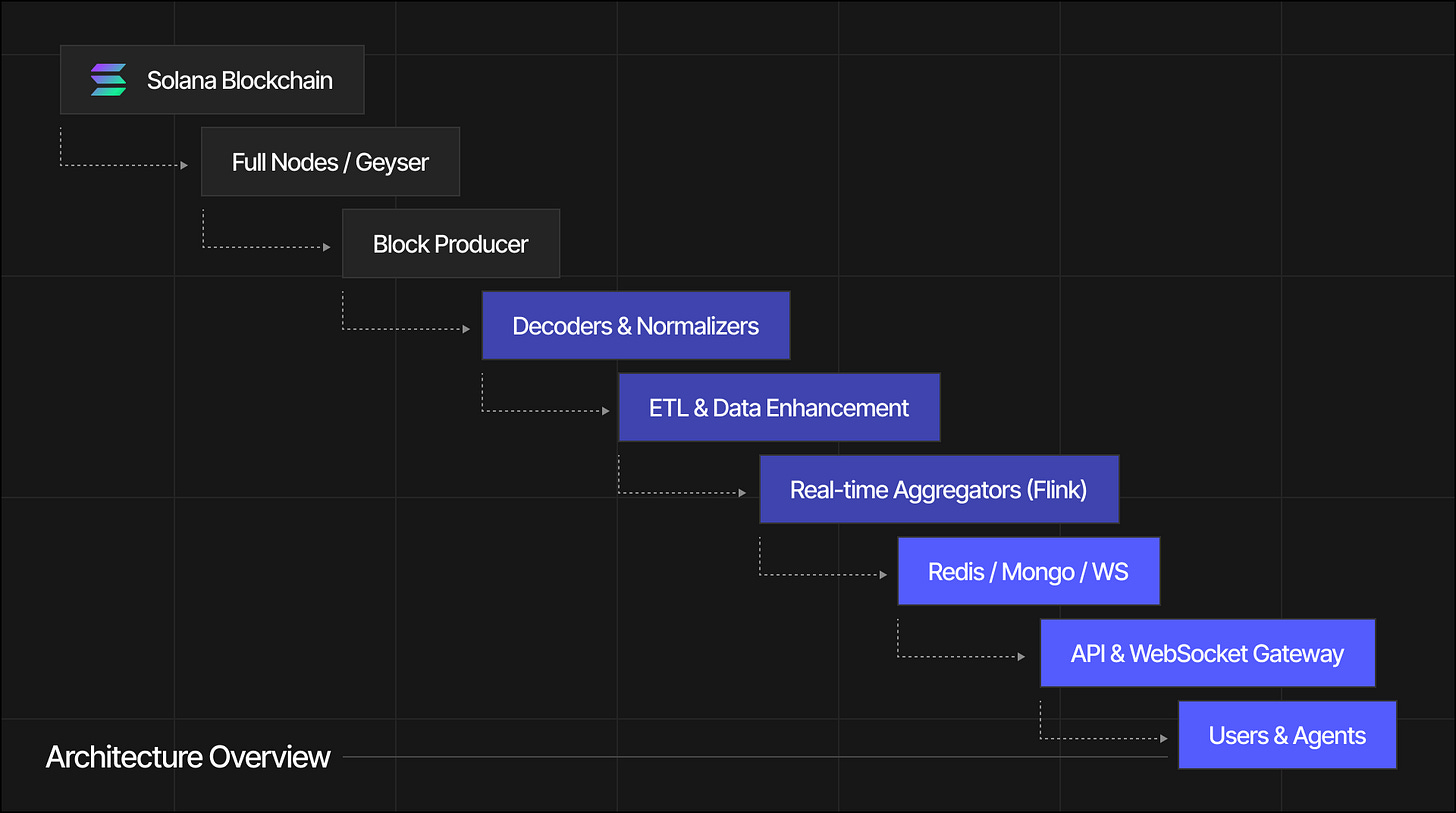

LYS Core operates at the block level. As each block finalizes, Core:

Parses all on-chain logs and events

Normalizes entities (wallets, tokens, protocols)

Links related instructions into coherent flows (e.g. multi-hop swaps, liquidity migrations)

Labels and classifies events (e.g. liquidations, strategic rebalancing)

Emits structured, contextual streams with millisecond latency

In effect, Core transforms raw logs into signal-level primitives. Rather than delivering “Program X instruction on account Y,” it outputs:

“Liquidation at lending pool A: borrower B’s collateral moved, debt repaid, slippage amount, counterparty C”

“Multi-hop swap path [Token1 → Token2 → Token3] executed by wallet W, with net amounts and fees”

“Wallet W’s aggregated exposure changed by –5% across tokens T1, T2”

These primitives are directly consumable by strategy systems or execution engines; no further cleaning or decoding is required.
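
As a rough illustration of what consuming such primitives could look like, the sketch below models two of the examples above as typed records. Every class and field name here is an assumption made for this example; it is not LYS Core's actual schema.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Liquidation:
    """Hypothetical shape for a liquidation primitive."""
    slot: int
    pool: str
    borrower: str
    counterparty: str
    debt_repaid: Decimal


@dataclass(frozen=True)
class MultiHopSwap:
    """Hypothetical shape for a multi-hop swap primitive."""
    slot: int
    wallet: str
    path: tuple[str, ...]
    amount_in: Decimal
    amount_out: Decimal
    fees: Decimal


def describe(event: object) -> str:
    """Dispatch on event type; no log decoding is needed at this layer."""
    if isinstance(event, Liquidation):
        return (f"liquidation at {event.pool}: {event.borrower} "
                f"repaid {event.debt_repaid} via {event.counterparty}")
    if isinstance(event, MultiHopSwap):
        return f"swap {' -> '.join(event.path)} by {event.wallet}"
    return "unrecognized event"


swap = MultiHopSwap(1, "W", ("Token1", "Token2", "Token3"),
                    Decimal("10"), Decimal("9.9"), Decimal("0.03"))
print(describe(swap))  # swap Token1 -> Token2 -> Token3 by W
```

The point of the typed shape is that strategy code branches on event kind and reads named fields, rather than re-deriving them from program logs.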

Latency & Performance

Our current internal benchmarks target ~14 ms of feed latency from block finality to structured output. (Note: this is a realistic target; actual performance depends on chain load, compute complexity, and protocol coverage.)

By contrast, custom pipelines built on top of RPC often take 100–300 ms or more to parse, normalize, and reconstruct flows, which is far too slow for HFT-style strategies.

Integrity and Coverage

Core is protocol-agnostic but extensible. It continuously integrates new protocols and versions. When a new DEX version or liquidity pool emerges, Core’s parser updates to maintain coverage.

Additionally, Core offers backfill and catch-up modes so agents joining late can hydrate full state and then transition to streaming mode.
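
One common way to implement this hydrate-then-stream handoff is sketched below. It assumes events are plain dicts carrying a `slot`, that the backfill contains only complete slots, and that the live feed was buffered while the backfill ran; none of this is taken from Core's actual API.

```python
from typing import Iterable, Iterator

Event = dict  # hypothetical shape: {"slot": int, "wallet": str, "delta": int}


def hydrate_then_stream(backfill: Iterable[Event], live: Iterable[Event]) -> Iterator[Event]:
    """Yield backfilled events first, then only live events from later slots."""
    last_slot = -1
    for ev in backfill:
        last_slot = max(last_slot, ev["slot"])
        yield ev
    for ev in live:
        if ev["slot"] > last_slot:  # drop overlap already covered by the backfill
            yield ev


def apply_event(state: dict, ev: Event) -> None:
    """Fold one event into a per-wallet running balance."""
    state[ev["wallet"]] = state.get(ev["wallet"], 0) + ev["delta"]


backfill = [{"slot": 100, "wallet": "W", "delta": 5},
            {"slot": 101, "wallet": "W", "delta": -2}]
live = [{"slot": 101, "wallet": "W", "delta": -2},  # duplicate of a backfilled slot
        {"slot": 102, "wallet": "W", "delta": 4}]

state: dict = {}
for ev in hydrate_then_stream(backfill, live):
    apply_event(state, ev)
print(state)  # {'W': 7}
```

Deduplicating on slot number keeps the handoff simple, but it is only safe under the complete-slot assumption. A feed that can split a slot across the boundary would need a finer-grained key, such as slot plus transaction index.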

Use Case: Arbitrage Engine

Consider an arbitrage system monitoring two liquidity pools, say Pool A and Pool B, for cross-pool price divergences.

Without Core (RPC-based approach):

Poll raw swap logs from each pool via RPC

Parse token accounts, resolve decimals

Detect candidate paths

Reconstruct multi-hop paths

Evaluate spread

Submit a trade

This pipeline, especially reconstruction and normalization, can take hundreds of milliseconds. By the time it finishes, the arb may no longer exist.

With Core:

Core emits a structured “arbitrage candidate” event immediately after block finality

Strategy logic computes the spread and submits via LYS Flash

Execution happens in the same or next block

This reduces decision latency dramatically and allows strategies to be responsive at the block boundary.
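
The "computes the spread" step might look something like the sketch below. The candidate fields (`price_a`, `price_b`, `fee_bps_a`, `fee_bps_b`) and the 5 bps threshold are assumptions for illustration, not the actual event format. Subtracting fees additively is an approximation, and the sketch ignores price impact and transaction costs.

```python
from decimal import Decimal


def net_spread_bps(price_a: Decimal, price_b: Decimal,
                   fee_bps_a: Decimal, fee_bps_b: Decimal) -> Decimal:
    """Gross price gap between two pools in basis points, minus both pools' fees."""
    low, high = sorted((price_a, price_b))
    gross_bps = (high - low) / low * Decimal(10_000)
    return gross_bps - fee_bps_a - fee_bps_b


def should_trade(candidate: dict, min_edge_bps: Decimal = Decimal(5)) -> bool:
    """Trade only when the net edge clears a minimum threshold."""
    edge = net_spread_bps(candidate["price_a"], candidate["price_b"],
                          candidate["fee_bps_a"], candidate["fee_bps_b"])
    return edge >= min_edge_bps


# Hypothetical candidate: a 1% gross gap with 30 bps of fees on each pool.
candidate = {
    "price_a": Decimal("100.00"),
    "price_b": Decimal("101.00"),
    "fee_bps_a": Decimal(30),
    "fee_bps_b": Decimal(30),
}
print(should_trade(candidate))  # True: 100 bps gross - 60 bps fees = 40 bps net
```

Because the inputs arrive already normalized, the strategy-side work reduces to a few arithmetic operations and a threshold check.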

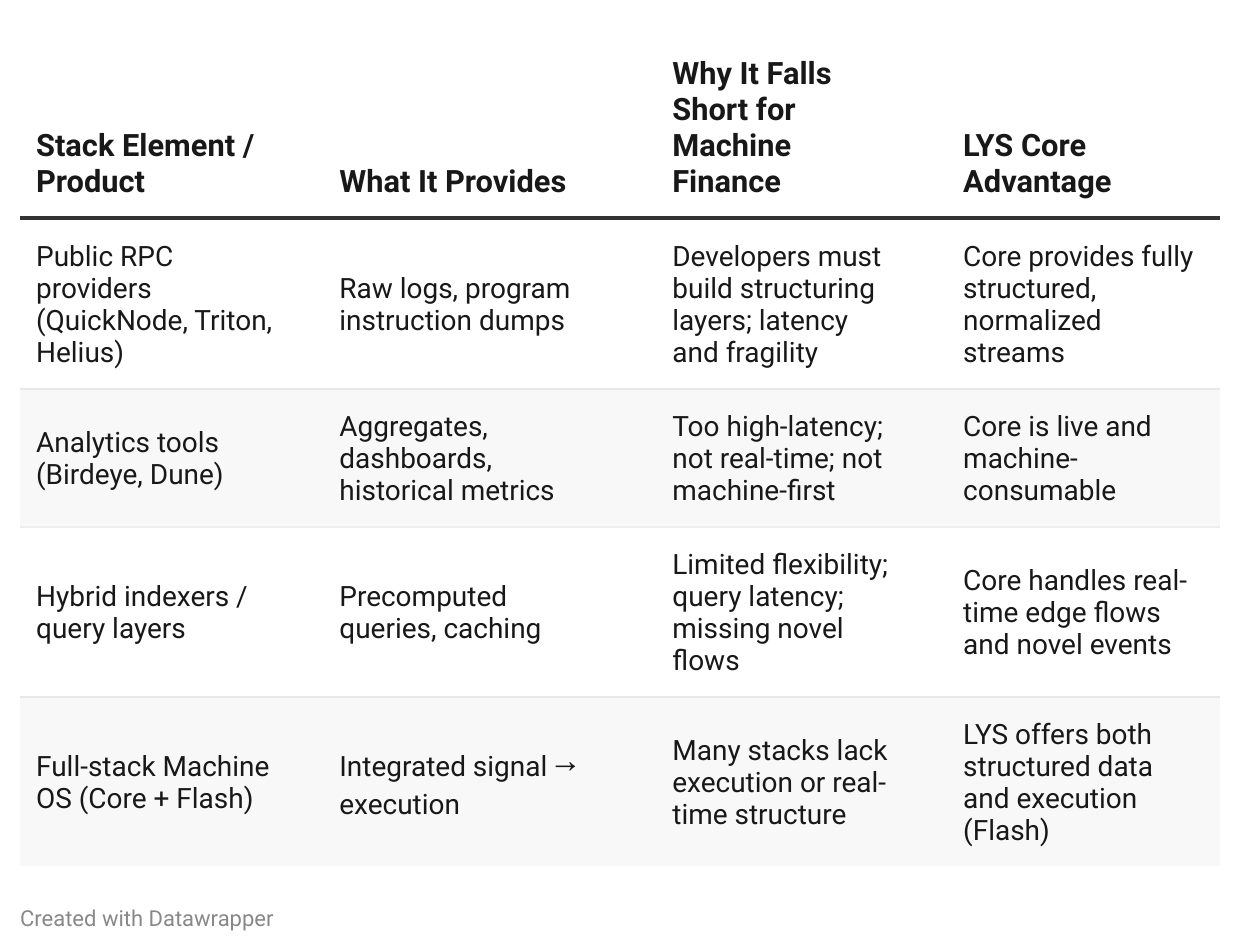

Comparison to Alternatives

Many players provide data or analytics, but none deliver structured, low-latency signals built for agents.

Risks, Challenges & Mitigations

Protocol Drift: New DEX versions or program upgrades may break parsers.

Mitigation: fast parser deployment pipeline, protocol abstraction layers, schema versioning.

Load Scaling: Under high network load, compute required to parse and rebuild flows may spike.

Mitigation: horizontal scaling, incremental updates, partial event filtering, compute sharding.

Edge Cases & Unknown Programs: Some on-chain interactions may not map cleanly into known flow types.

Mitigation: fallback “untyped” event streams, developer extension APIs, human-in-the-loop protocol annotation.

Agreement on Standards: Differences in how protocols label token decimals, LP shares, etc., can cause normalization challenges.

Mitigation: canonical token registry, cross-protocol mapping, community collaboration on standards.
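
Two of these mitigations, schema versioning and a fallback "untyped" stream, can be combined in a small parser registry. The sketch below is one possible shape for that idea; the program name, byte layouts, and output fields are all invented for illustration.

```python
from typing import Callable

Parser = Callable[[bytes], dict]
PARSERS: dict[tuple[str, int], Parser] = {}


def register(program: str, version: int) -> Callable[[Parser], Parser]:
    """Register a parser for one (program, version) pair."""
    def wrap(fn: Parser) -> Parser:
        PARSERS[(program, version)] = fn
        return fn
    return wrap


@register("example_dex", 1)
def parse_dex_v1(data: bytes) -> dict:
    # Hypothetical v1 layout: an 8-byte little-endian amount.
    return {"type": "swap", "schema": 1, "amount": int.from_bytes(data[:8], "little")}


@register("example_dex", 2)
def parse_dex_v2(data: bytes) -> dict:
    # Hypothetical v2 layout: a one-byte tag precedes the amount.
    return {"type": "swap", "schema": 2, "amount": int.from_bytes(data[1:9], "little")}


def decode(program: str, version: int, data: bytes) -> dict:
    """Use the matching parser, or emit an untyped event instead of dropping data."""
    parser = PARSERS.get((program, version))
    if parser is None:
        return {"type": "untyped", "program": program, "version": version, "raw": data.hex()}
    return parser(data)


print(decode("unknown_program", 1, b"\xff")["type"])  # untyped
```

With this shape, a program upgrade means registering one more parser rather than editing existing ones, and unrecognized programs still surface downstream with their raw payload attached.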

Why Core Is Foundational

LYS Core is more than just a data feed. It is the eyes of the Machine OS. Without precise, contextual vision, autonomous strategies are blind. Execution engines, even the best, need structured input to operate reliably.

As the next cycle of onchain capital markets matures, raw transparency will no longer suffice. Success will go to those with machine-native clarity, where signals arrive at block speed and strategies can act immediately.

LYS Core is designed to be that clarity.

Use the LYS stack and get your LYS API Keys on our Developer Portal: https://lyslabs.ai/sandbox